DataStage is not very compatible with the AWS ecosystem. To achieve fast loads, you need to build jobs that follow the Redshift best practices (Loading Data into Redshift), which is not an easy task. On the other hand, Informatica provides an excellent Redshift connector that works like a charm.

Download the Amazon Redshift JDBC driver, version 2.1. On the download page, choose the option: JDBC 4.2-compatible driver version 2.0 and AWS SDK driver-dependent libraries. Then copy the JAR file to the directory of your choice.

Installation and Configuration of Driver

Amazon Redshift offers drivers for tools that are compatible with the JDBC 4.2 API. Download the Amazon Redshift JDBC driver, version 2.1, and its dependencies from here: Official Redshift JDBC Driver Page. If you don't have the AWS SDK for Java installed, download the ZIP file with the JDBC 4.2-compatible driver and driver-dependent libraries. With the driver, you can connect to your Redshift cluster from any Java application, such as Apache Spark, Apache Hive, Apache Zeppelin, DBeaver, SQL Workbench/J, etc. See the Amazon Redshift JDBC Driver Installation and Configuration Guide for more information.

(2) It is recommended to install the driver to the tmp folder first and then move it to the 3rd-party-drivers folder. You need to check your installation path. In this example, I set the installation path as /opt/IBM/InformationServer/Server/DSEngine/.

mv /tmp/RedshiftJDBC42-1.jar /opt/IBM/InformationServer/Server/DSEngine/3rd-party-drivers/

(3) Once the driver is moved to the 3rd-party-drivers folder, you need to edit the config file in the DSEngine folder. The config file requires two parameters, CLASSPATH and CLASSNAME. Both can be obtained in the Redshift JDBC driver download page here.

CLASSPATH: Add the path to the JDBC jar file, as in ‘/opt/IBM/InformationServer/Server/DSEngine/3rd-party-drivers/RedshiftJDBC42-1.jar’

CLASSNAME: Add the class name as in the documentation, like ‘’

So, all we need to do is to add the Redshift dependencies here.

To insert or update records, make sure to tick auto-commit in the JDBC stage. If this option is not ticked, it will lock the table and the job hangs. Note that inserting or updating records in Redshift through the JDBC (or even ODBC) driver with DataStage is extremely slow.
In my experience, the latest version (4.2) was not compatible.
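The staging-then-move step described above can be sketched in Python. This is a minimal illustration rather than part of any DataStage tooling: the helper name `install_driver` is hypothetical, and the paths in the usage section are simply the example paths from this post, so adjust them to your own installation.

```python
import shutil
from pathlib import Path


def install_driver(staged_jar: str, drivers_dir: str) -> Path:
    """Move a staged driver jar into the drivers folder and return its new path.

    `install_driver` is a hypothetical helper written for this post.
    """
    src = Path(staged_jar)
    dest_dir = Path(drivers_dir)

    # Fail early with a clear message instead of moving a missing file.
    if not src.is_file():
        raise FileNotFoundError(f"staged driver not found: {src}")
    if not dest_dir.is_dir():
        raise NotADirectoryError(f"drivers folder not found: {dest_dir}")

    dest = dest_dir / src.name
    shutil.move(str(src), str(dest))
    return dest


if __name__ == "__main__":
    # Example paths from this post; your installation path may differ.
    jar_path = install_driver(
        "/tmp/RedshiftJDBC42-1.jar",
        "/opt/IBM/InformationServer/Server/DSEngine/3rd-party-drivers",
    )
    # The returned path is the value to put in the CLASSPATH parameter.
    print(f"CLASSPATH value: {jar_path}")
```

Checking both paths before the move means a wrong installation path is reported immediately, rather than surfacing later as a driver that DataStage cannot find.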

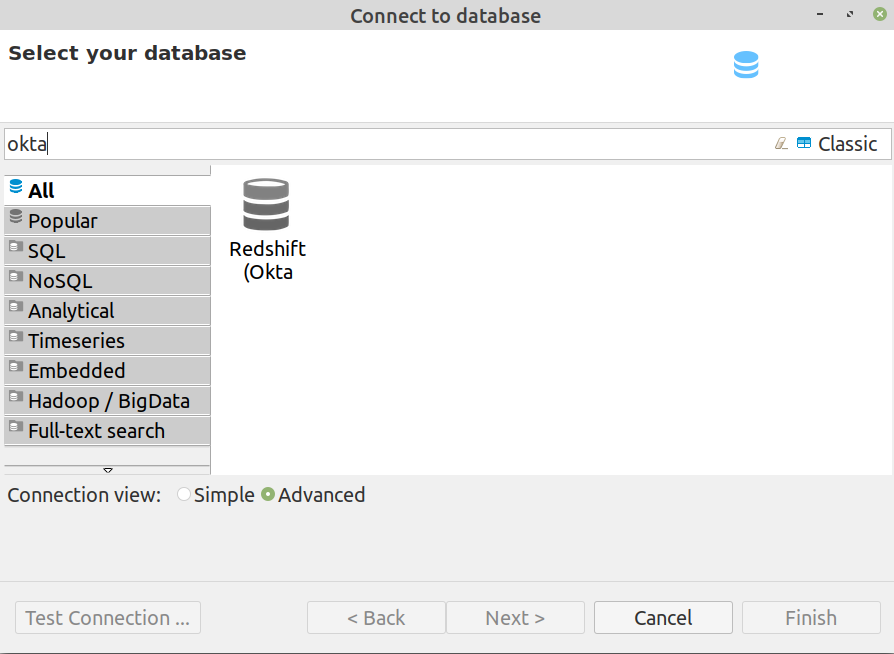

In this post, we will discuss how to add the Redshift JDBC driver to the DataStage server and configure it. In order to use a JDBC driver, you need to download the driver and set up the configuration files (see here).

(1) Download the Redshift JDBC driver from here.

Here are download links for the latest release: the jar file is the Redshift JDBC driver, and the zip file contains the driver jar file and all the dependency files required to use the AWS SDK for the IDP/IAM features. It is also available on Maven Central, groupId: and artifactId: redshift-jdbc42. Building the project produces redshift-jdbc42-.zip files under the target directory. An entry in the changelog is generated upon release using gitchangelog, with gitchangelog.rc used when generating the changelog. This project is licensed under the Apache-2.0 License.

With Amazon EMR release 6.4.0 and later, every release image includes a connector between Apache Spark and Amazon Redshift. With this connector, you can use Spark on Amazon EMR to process data stored in Amazon Redshift.

I'm late to this question, but I've spent a ton of time trying to get a local instance of pyspark connected to Amazon Redshift. I am on a Mac, so your configuration might be slightly different.
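For the local pyspark case, a rough sketch of the connection settings might look like the following. It assumes Spark's generic JDBC data source rather than the EMR connector mentioned above, and it assumes the Redshift default port 5439. The helper names and the cluster host, table, and credentials are hypothetical placeholders. The driver class name is left as an optional argument, to be taken from the driver documentation.

```python
from typing import Dict, Optional


def redshift_jdbc_url(host: str, database: str, port: int = 5439) -> str:
    """Build a Redshift JDBC URL of the form jdbc:redshift://host:port/database."""
    return f"jdbc:redshift://{host}:{port}/{database}"


def spark_jdbc_options(
    url: str,
    table: str,
    user: str,
    password: str,
    driver: Optional[str] = None,
) -> Dict[str, str]:
    """Collect the options understood by Spark's generic JDBC data source."""
    options = {"url": url, "dbtable": table, "user": user, "password": password}
    if driver:
        # Class name as given in the driver documentation (not assumed here).
        options["driver"] = driver
    return options


if __name__ == "__main__":
    # Hypothetical endpoint and credentials, for illustration only.
    url = redshift_jdbc_url("example-cluster.example.com", "dev")
    opts = spark_jdbc_options(url, "public.sales", "awsuser", "secret")
    print(opts["url"])
    # With a SparkSession named `spark` and the driver jar on its classpath:
    #   df = spark.read.format("jdbc").options(**opts).load()
```

Keeping the options in a plain dictionary makes it easy to inspect what will be passed to Spark before a session is started.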
